I asked Siri to reschedule my Thursday call last week. She read me my calendar. That’s it. I still had to open the app, find the event, tap edit, pick a new time, and send the update myself. The whole interaction saved me about four seconds and cost me ten.

A personal agent is an AI that knows your context, calls tools to act on your behalf, and improves every time you use it. An AI assistant answers commands inside one ecosystem. Both use AI. But the relationship between you and the software is fundamentally different, and that difference determines whether the AI actually frees up your time or just narrates what you’re already doing.

What is an AI assistant?

Picture your phone buzzing at 6:45 AM with a commute alert you didn’t ask for. That’s Google Assistant being proactive. Now picture asking it to pull last quarter’s revenue from your Notion workspace. Silence.

An AI assistant is software that responds to voice or text commands within a specific platform ecosystem. Siri, Google Assistant, and Alexa are the canonical examples. They handle quick, one-shot tasks: setting timers, reading weather, controlling smart lights, sending dictated texts.

They’re genuinely good at this. Google Assistant handles common factual questions reliably. Siri integrates deeply enough with iOS that it can toggle your Do Not Disturb, find a photo from last Tuesday, and queue up your gym playlist without you touching the screen. Alexa moved $25 billion in commerce through voice commands in 2024, according to Amazon’s annual report.

But three constraints define every AI assistant on the market:

They wait. Between activations, nothing happens. An AI assistant sits in silence until you speak. It doesn’t notice that your 3pm meeting conflicts with a dentist appointment you booked last week.

They forget. Ask Siri the same question three days in a row and it gives you the same answer each time, with no awareness that you’ve asked before. There’s a thin layer of preferences (your name, home address, preferred units) and not much else.

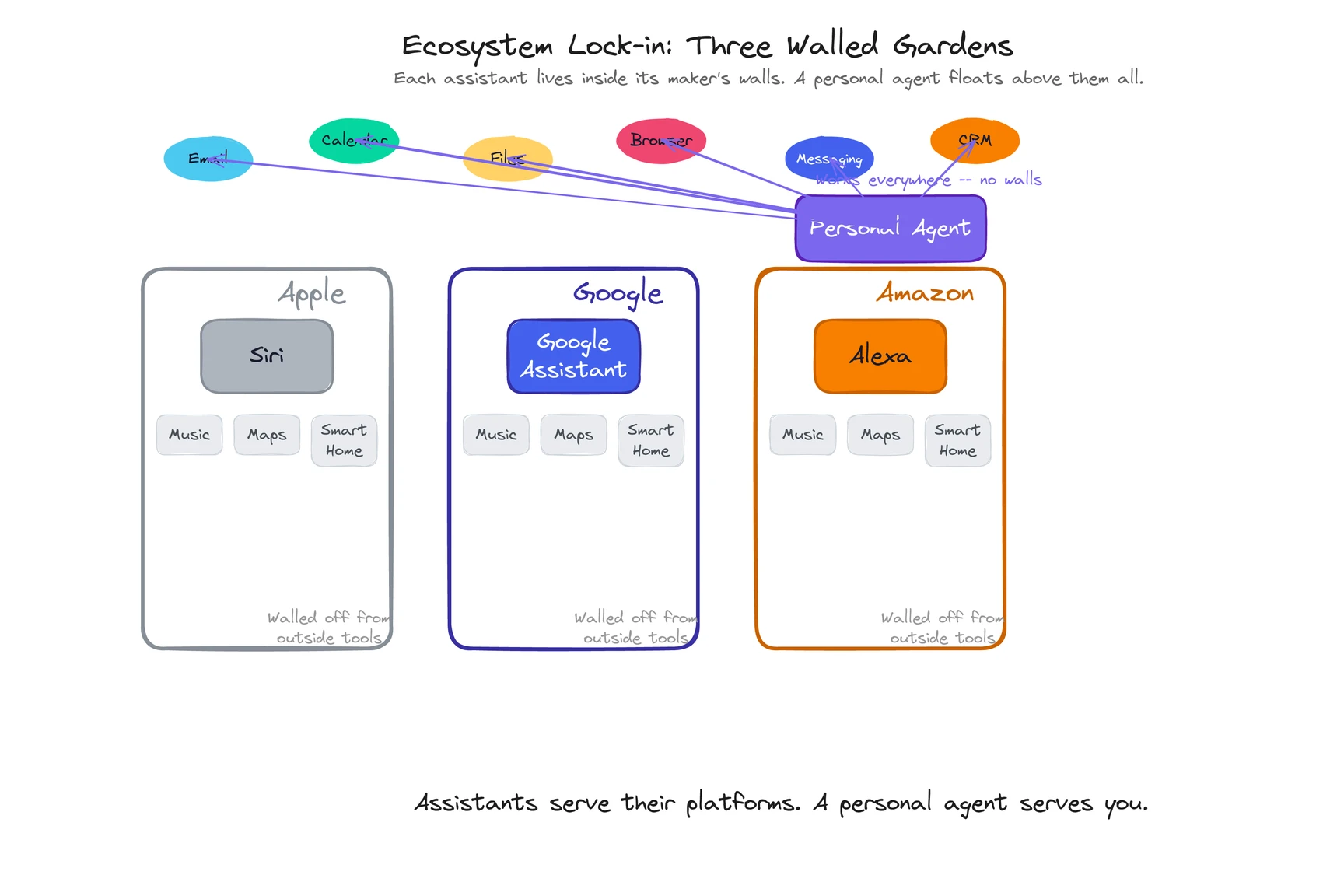

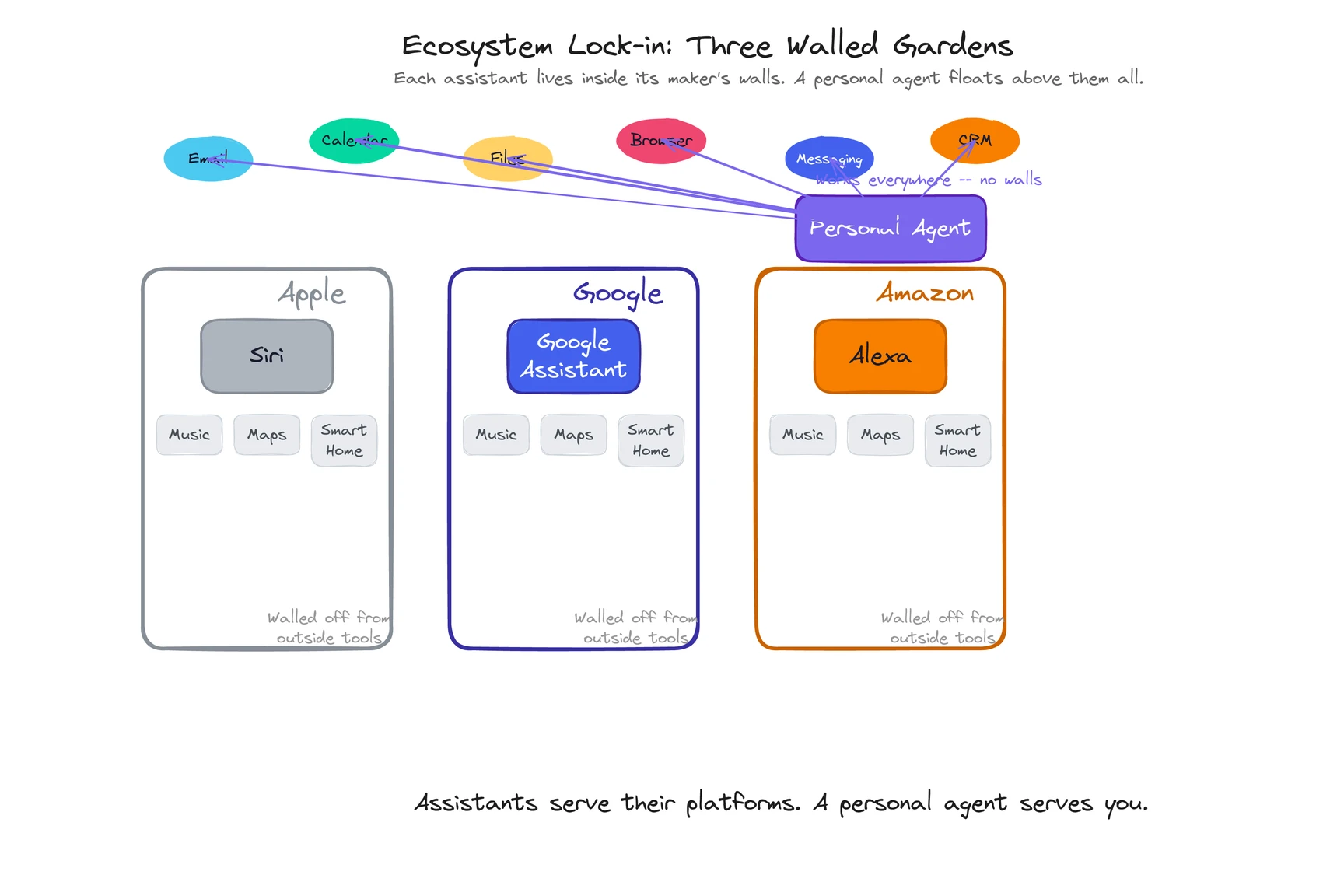

They’re fenced in. Siri lives inside Apple. Google Assistant lives inside Google. Alexa lives inside Amazon. Each one is excellent within its own world and nearly blind outside it. Ask Siri to pull data from your Salesforce dashboard and it doesn’t know what you’re talking about. The assistant understands its ecosystem. It doesn’t understand your work.

These aren’t bugs. They’re architecture decisions. Apple built Siri to make iPhones easier to use, not to manage your workday across twelve SaaS tools.

What is a personal agent?

If AI assistants are remote controls for your devices, a personal agent is closer to a new hire who showed up already knowing your projects, your communication style, and which meetings you secretly wish would get canceled.

We wrote a full breakdown in “What Is a Personal Agent?” but the short version is this: a personal agent is an AI that maintains persistent knowledge about you across sessions, takes autonomous action across your real digital tools (not just one ecosystem), and gets better the longer you use it. It manages email, calendar, files, browser tasks, and communication without waiting for step-by-step instructions.

The practical difference shows up in moments like this: you get an email from a prospect mentioning a document you shared last month. An AI assistant can read you the email. A personal agent reads the email, finds the document, checks your CRM for the prospect’s history, and drafts a reply referencing the specific metrics they mentioned. One AI has access to your inbox. The other has access to your work.
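To make that chain concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the `Email` type, the `find_document` and `crm_history` tools, and the in-memory `DOCUMENTS` and `CRM` stand-ins are hypothetical, not any real product's API. It also simplifies the reply to a document link and the last CRM touchpoint.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Email:
    sender: str
    subject: str
    body: str


# Hypothetical in-memory stand-ins for a file store and a CRM.
DOCUMENTS = {"Q3 pricing overview": "shared/q3-pricing-overview.pdf"}
CRM = {"dana@example.com": ["Intro call", "Sent Q3 pricing overview"]}


def assistant_response(email: Email) -> str:
    """An assistant stops here: it reads the message back to you."""
    return f"New email from {email.sender}: {email.subject}"


def find_document(email: Email) -> Optional[str]:
    """Return the path of the first known document named in the email."""
    text = f"{email.subject} {email.body}".lower()
    for title, path in DOCUMENTS.items():
        if title.lower() in text:
            return path
    return None


def crm_history(sender: str) -> List[str]:
    """Look up past interactions with this sender."""
    return CRM.get(sender, [])


def agent_response(email: Email) -> str:
    """An agent chains the tools and returns a draft for you to review."""
    doc = find_document(email)
    history = crm_history(email.sender)
    lines = [f"Hi, thanks for your note about '{email.subject}'."]
    if doc:
        lines.append(f"I've re-attached the document you mentioned: {doc}")
    if history:
        lines.append(f"Last touchpoint on file: {history[-1]}")
    return "\n".join(lines)


email = Email(
    sender="dana@example.com",
    subject="Follow-up",
    body="Could you resend the Q3 pricing overview from last month?",
)
print(assistant_response(email))
print(agent_response(email))
```

The assistant function only touches the inbox. The agent function pulls from three sources before it writes a word, which is the whole difference in miniature.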

How are personal agents and AI assistants different?

I’ve been tracking how people describe the difference, and most explanations overcomplicate it. Seven dimensions cover it.

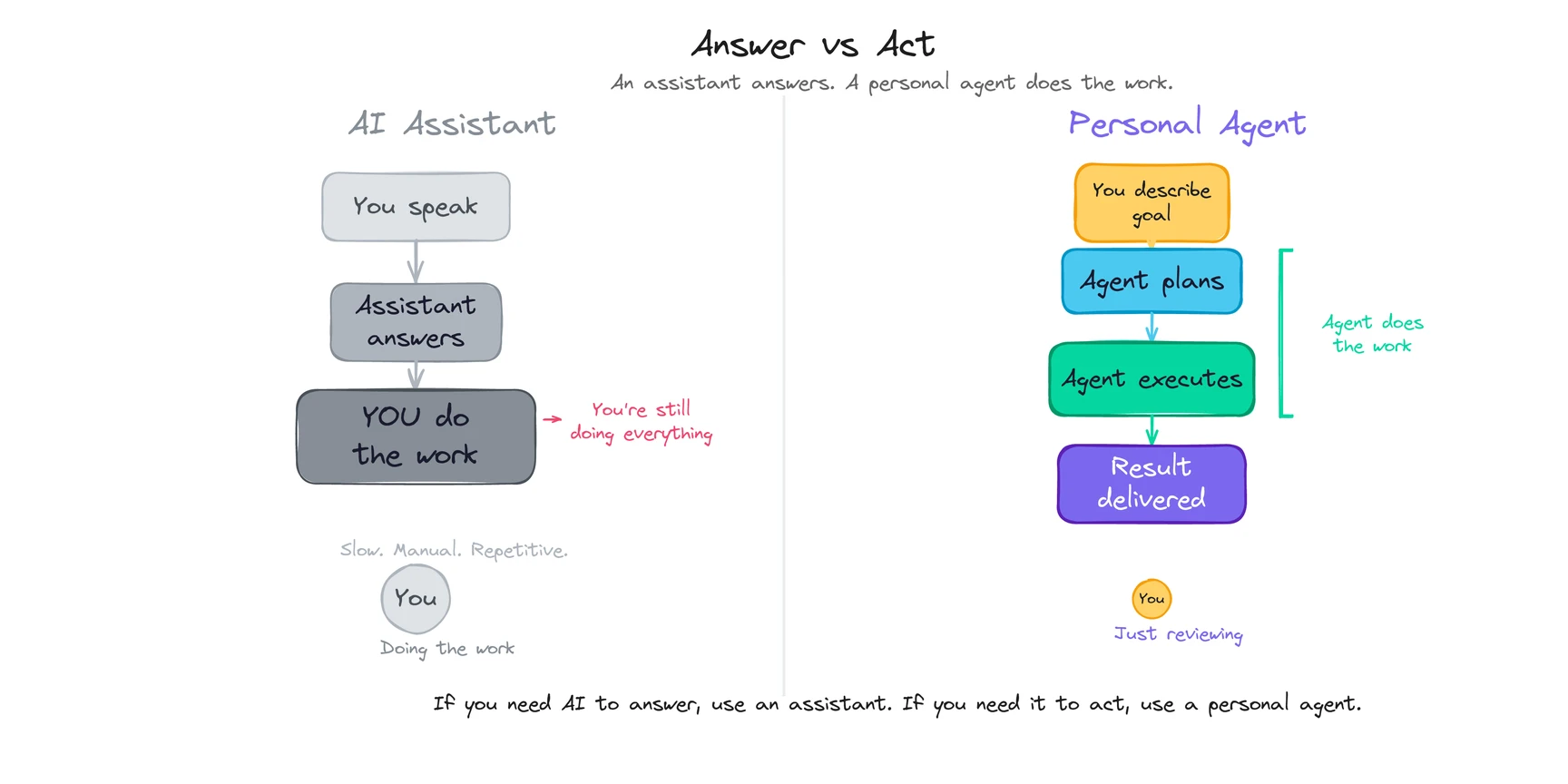

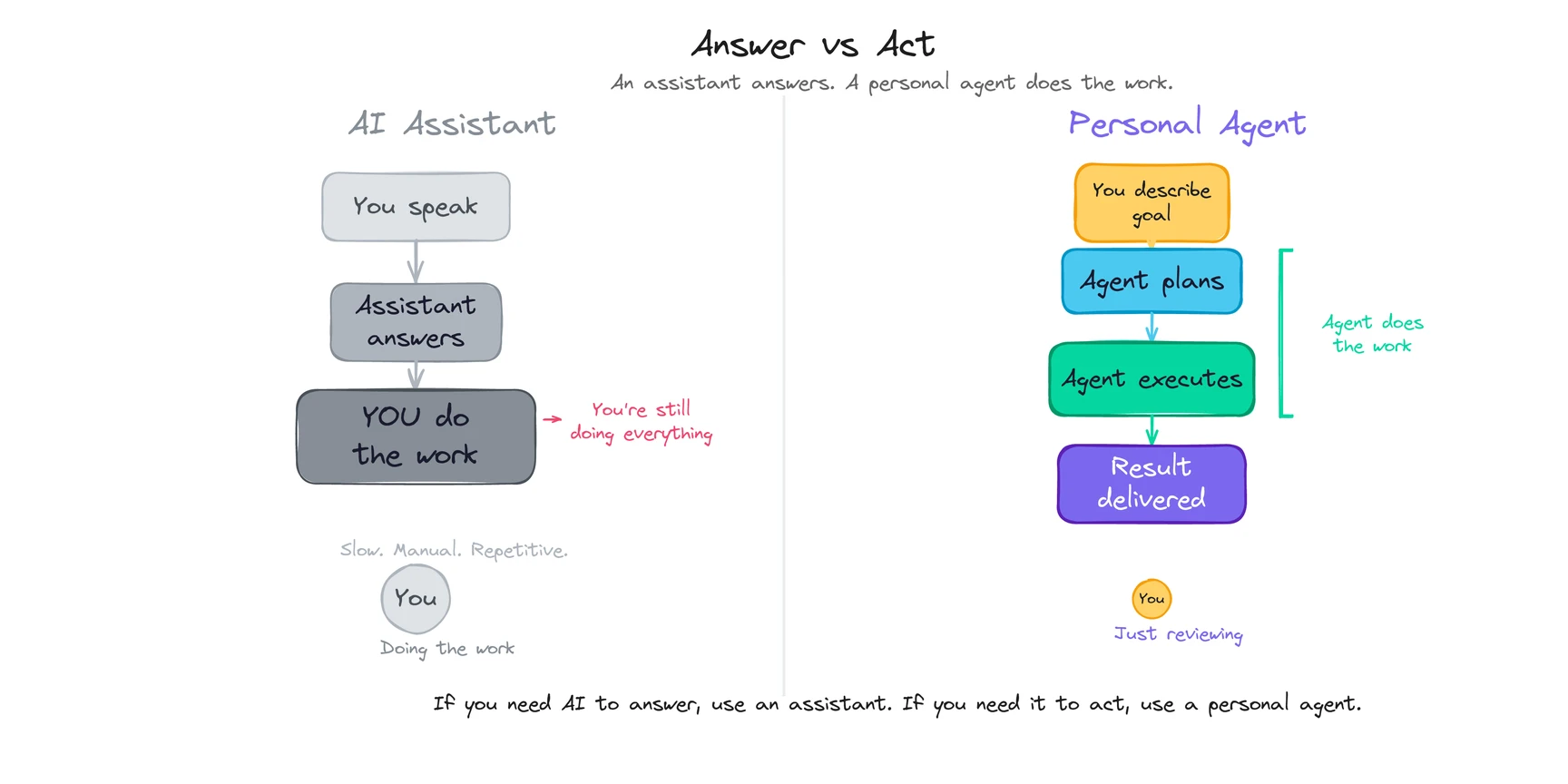

| Dimension | AI Assistant | Personal Agent |
|---|---|---|
| Primary function | Answers questions and executes simple commands within an ecosystem | Takes autonomous action across your digital life based on context and goals |
| Who does the work? | You, after getting information | The agent executes; you review when needed |
| Memory | Minimal: basic preferences, no cross-session learning | Persistent across sessions: what you said, how you behave, what it’s learned, what you’ve corrected |
| Scope | Single ecosystem (Apple, Google, Amazon) | Cross-platform: email, calendar, files, browser, desktop, messaging |
| Proactive? | Rare and pre-programmed (weather alerts, commute times) | Yes: monitors, anticipates, acts on patterns it’s learned about you |
| Real-world action | Limited: timers, dictated messages, smart device control | Yes: sends emails, books meetings, manages files, conducts research, automates workflows |
| Examples | Siri, Google Assistant, Alexa | ego, Manus, Lindy |

The table is useful. But the real separation comes down to two gaps: memory and scope. Everything else follows from those.

Why does the memory gap matter so much?

This is the gap that creates all the others.

I used Google Assistant daily for about a year. It never learned that I take calls only after 10 AM. It never picked up that I respond to my CEO within minutes but let vendor emails sit for days. Every interaction felt like the first one. (I realize I’m complaining about free software, but the point stands.)

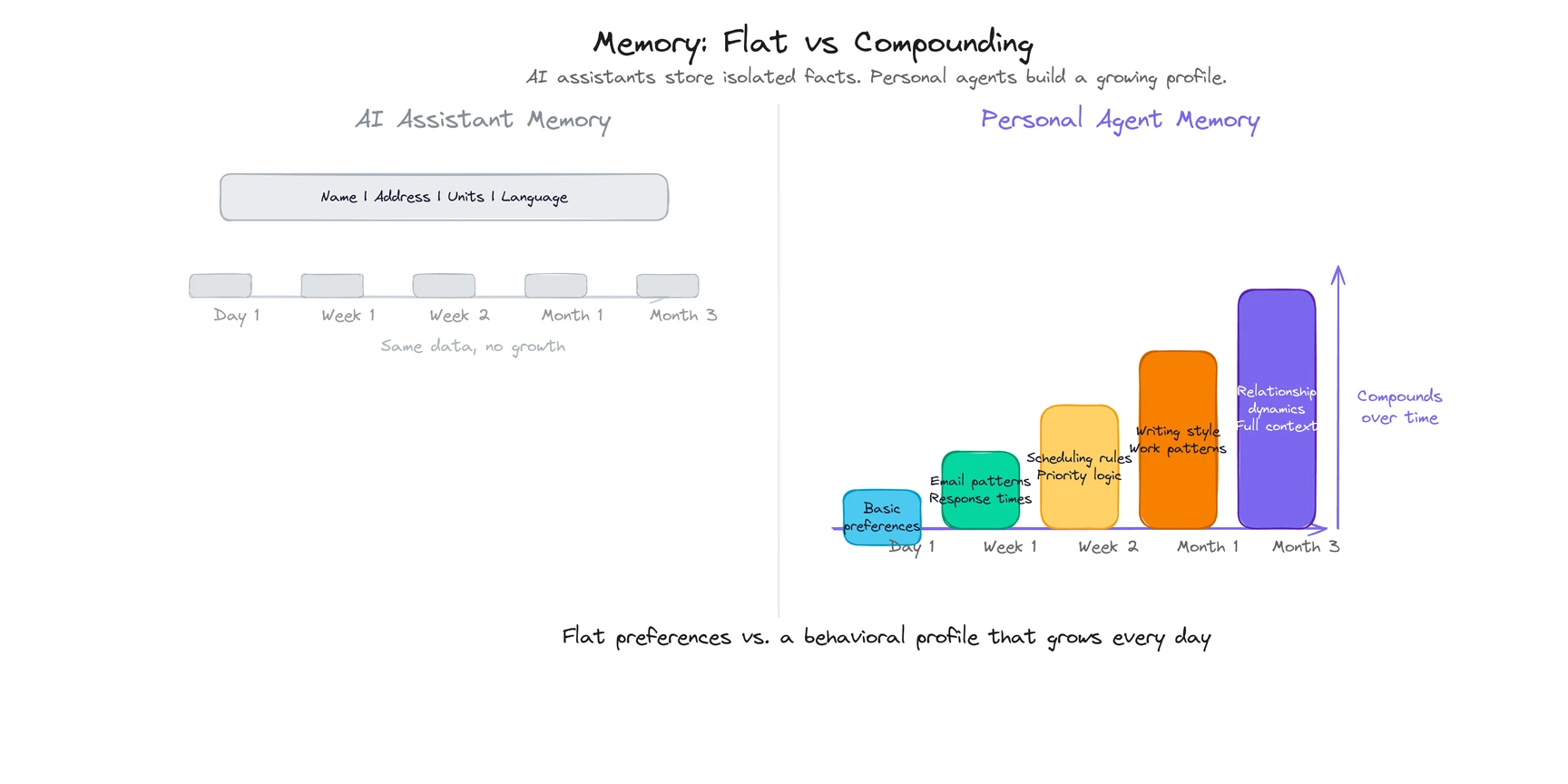

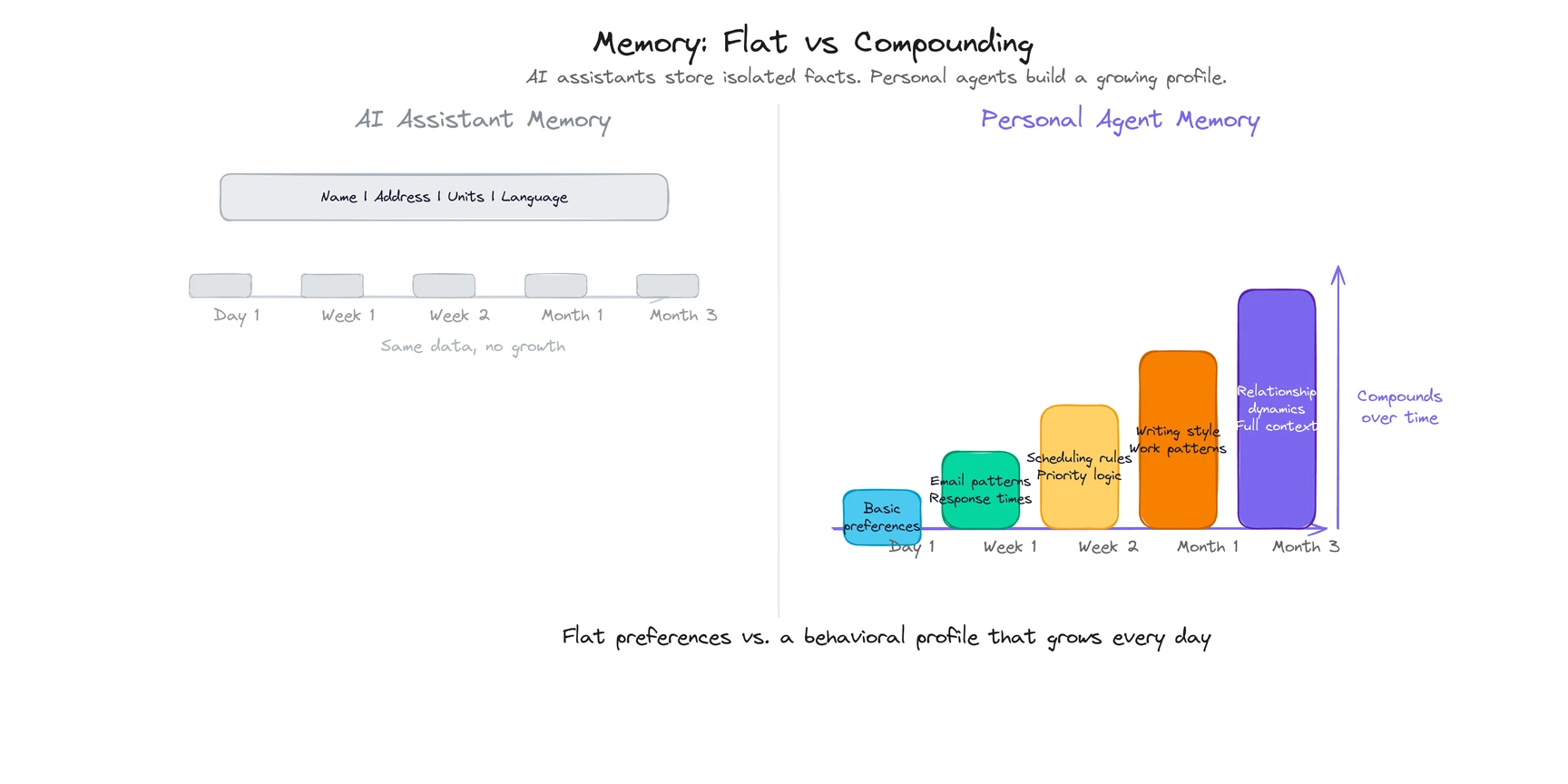

AI assistants store preferences as isolated facts. Your name. Your home address. Your preferred temperature units. That data is flat. It doesn’t grow. It doesn’t connect patterns across weeks.

A personal agent treats memory as a compounding system. Products like ego build preference profiles from browsing behavior, desktop activity, and interaction patterns across sessions. After a week, the agent handles routine emails without asking. After a month, it starts anticipating what you need before meetings. Not because the AI model got smarter, but because it accumulated enough context about you to make good calls.
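One way to picture "learning by watching" is a running tally of how quickly you reply to each sender. The sketch below is an assumption-heavy illustration, not a description of how ego or any other product works: the `observations` data and the `learn_priorities` helper are made up for the example.

```python
from collections import defaultdict
from statistics import median

# Hypothetical observations: (sender, minutes until you replied).
observations = [
    ("ceo@example.com", 4),
    ("ceo@example.com", 7),
    ("vendor@example.com", 2880),
    ("vendor@example.com", 4320),
    ("ceo@example.com", 3),
]


def learn_priorities(obs):
    """Group reply delays by sender and rank senders by median delay."""
    delays = defaultdict(list)
    for sender, minutes in obs:
        delays[sender].append(minutes)
    medians = {sender: median(values) for sender, values in delays.items()}
    # Shortest median delay first: those are the senders you treat as urgent.
    return sorted(medians, key=medians.get)


priorities = learn_priorities(observations)
print(priorities)  # ['ceo@example.com', 'vendor@example.com']
```

Nothing about the model changes between week one and week four in this picture. The list of observations just gets longer, and the ranking gets more trustworthy.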

Here’s a concrete comparison:

| Memory dimension | AI Assistant | Personal Agent |
|---|---|---|
| What you’ve said | Forgotten after the session (or stored as thin notes) | Kept across all sessions: tasks assigned, decisions explained, feedback given |
| How you behave | Not tracked | Learned by watching: which emails you respond to first, what times you’re productive, which meetings you stall on |
| What it’s inferred | Basic profile (name, location) | Your role, projects, communication style, relationship dynamics |
| What you’ve corrected | Lost | Permanent: tell it your CFO spells his name “Geoff” not “Jeff,” and it won’t get it wrong again |

That last row is the one people underestimate. Every correction makes the agent permanently better. The learning curve isn’t gradual. It’s steep. (For a technical walkthrough of how these memory layers feed into the agent’s reasoning loop, see “How Do Personal Agents Work?”)
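Here is a rough sketch of what "permanent" could mean in practice, assuming a hypothetical `AgentMemory` class that writes to a JSON file so facts survive between sessions. The class, its method names, and the rule that corrections override inferences are illustrative assumptions, not any vendor's actual design.

```python
import json
import tempfile
from pathlib import Path


class AgentMemory:
    """Hypothetical memory store: facts survive across sessions via a JSON file."""

    def __init__(self, path: Path):
        self.path = path
        self.data = {"inferred": {}, "corrections": {}}
        if path.exists():
            self.data = json.loads(path.read_text())

    def infer(self, key: str, value: str) -> None:
        """Record a guess the agent made on its own."""
        self.data["inferred"][key] = value
        self._save()

    def correct(self, key: str, value: str) -> None:
        """Record something the user explicitly fixed."""
        self.data["corrections"][key] = value
        self._save()

    def recall(self, key: str):
        """Corrections always win over inferences."""
        if key in self.data["corrections"]:
            return self.data["corrections"][key]
        return self.data["inferred"].get(key)

    def _save(self) -> None:
        self.path.write_text(json.dumps(self.data))


store = Path(tempfile.mkdtemp()) / "memory.json"

# Session 1: the agent guesses, then the user corrects it.
session1 = AgentMemory(store)
session1.infer("cfo_first_name", "Jeff")
session1.correct("cfo_first_name", "Geoff")

# Session 2: a fresh object reloads the same file.
session2 = AgentMemory(store)
session2.infer("cfo_first_name", "Jeff")  # a later wrong guess cannot undo the correction
print(session2.recall("cfo_first_name"))  # Geoff
```

Keeping corrections in their own layer is the design choice that matters here: the agent can keep guessing, but it can never guess its way back over something you told it directly.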

What about ecosystem lock-in?

Siri knows Apple. Google Assistant knows Google. Each is excellent inside its own boundaries and lost outside them.

This isn’t a criticism. It’s a business model. Apple built Siri to sell iPhones. Google built Assistant to keep you inside Search and Maps. Amazon built Alexa to move products and control smart homes. The assistant is the voice of its platform. It was never designed to understand your work across platforms.

A personal agent drops the ecosystem concept entirely. It operates wherever you work: Gmail and Outlook, Google Calendar and iCal, local files and cloud storage, Chrome tabs and desktop apps. It connects information across those sources the way a good executive assistant would, because it doesn’t owe its loyalty to any one platform.

The awkward truth is that platform companies have a structural conflict here. A truly useful personal agent would route you to whatever tool is best for the task, even a competitor’s tool. Siri will never recommend Google Maps, even when Google Maps has better transit directions for your route. Google Assistant will never suggest you use Apple Notes instead of Google Keep. The assistant serves the ecosystem first and you second.

A personal agent with a subscription business model doesn’t have that conflict. It gets paid by you. It serves you.

That’s the alignment question nobody talks about.

See what cross-platform action actually looks like →

When should you use an AI assistant vs a personal agent?

Honest take: you’ll probably use both. They solve different problems at different scales.

Use an AI assistant when:

- You need a quick factual answer (“What time is my next meeting?” “How tall is the Eiffel Tower?”)

- You want hands-free device control — phone, smart home, car

- The task is one step: set a timer, play a song, convert a measurement, send a quick text

- Context from previous interactions doesn’t matter

AI assistants are fast, voice-activated, and free. For quick lookups and device control, nothing beats them.

Use a personal agent when:

- Your work involves coordination across multiple tools

- You’re spending hours per week on tasks that require context but not creativity (inbox triage, scheduling, status updates, follow-ups)

- You want AI that compounds over time instead of resetting every session

- You need the AI to do things, not describe what you could do yourself

The dividing line: if you need the AI to answer, use an assistant. If you need it to act, you need a personal agent.

The future probably looks like coexistence. AI assistants handle quick, voice-first, device-level tasks. Personal agents handle the complex, context-rich, cross-platform work that currently eats up your day. One is a remote control for your devices. The other is a colleague who knows your work.

Explore how ego works as a personal agent →