I keep a dog-eared copy of Bill Gates’ The Road Ahead on my shelf. Page 103 describes a piece of software that learns your interests, schedules your meetings, negotiates on your behalf, and talks to other people’s software agents while you sleep. He published that in 1995. Netscape Navigator was barely a year old.

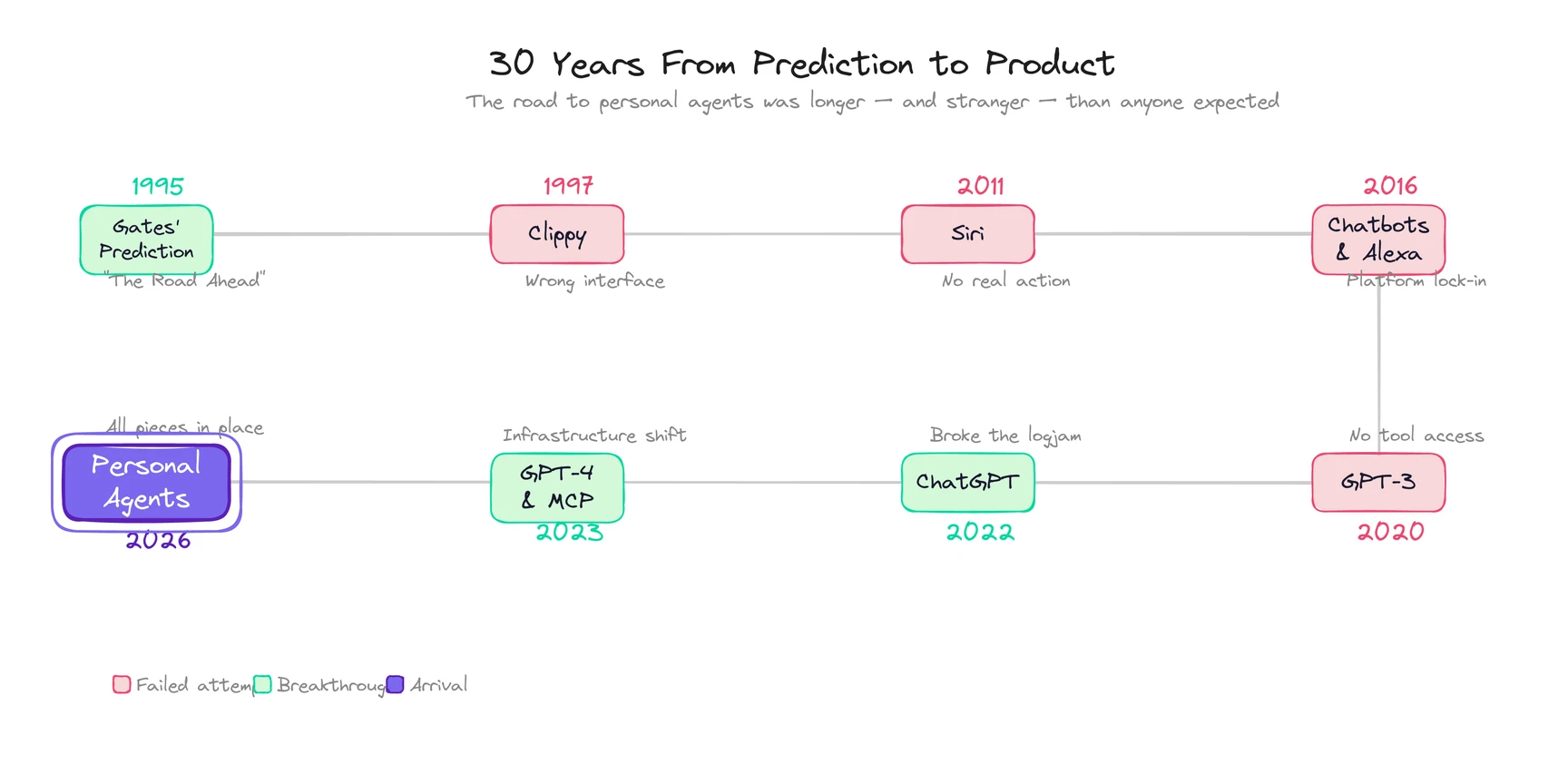

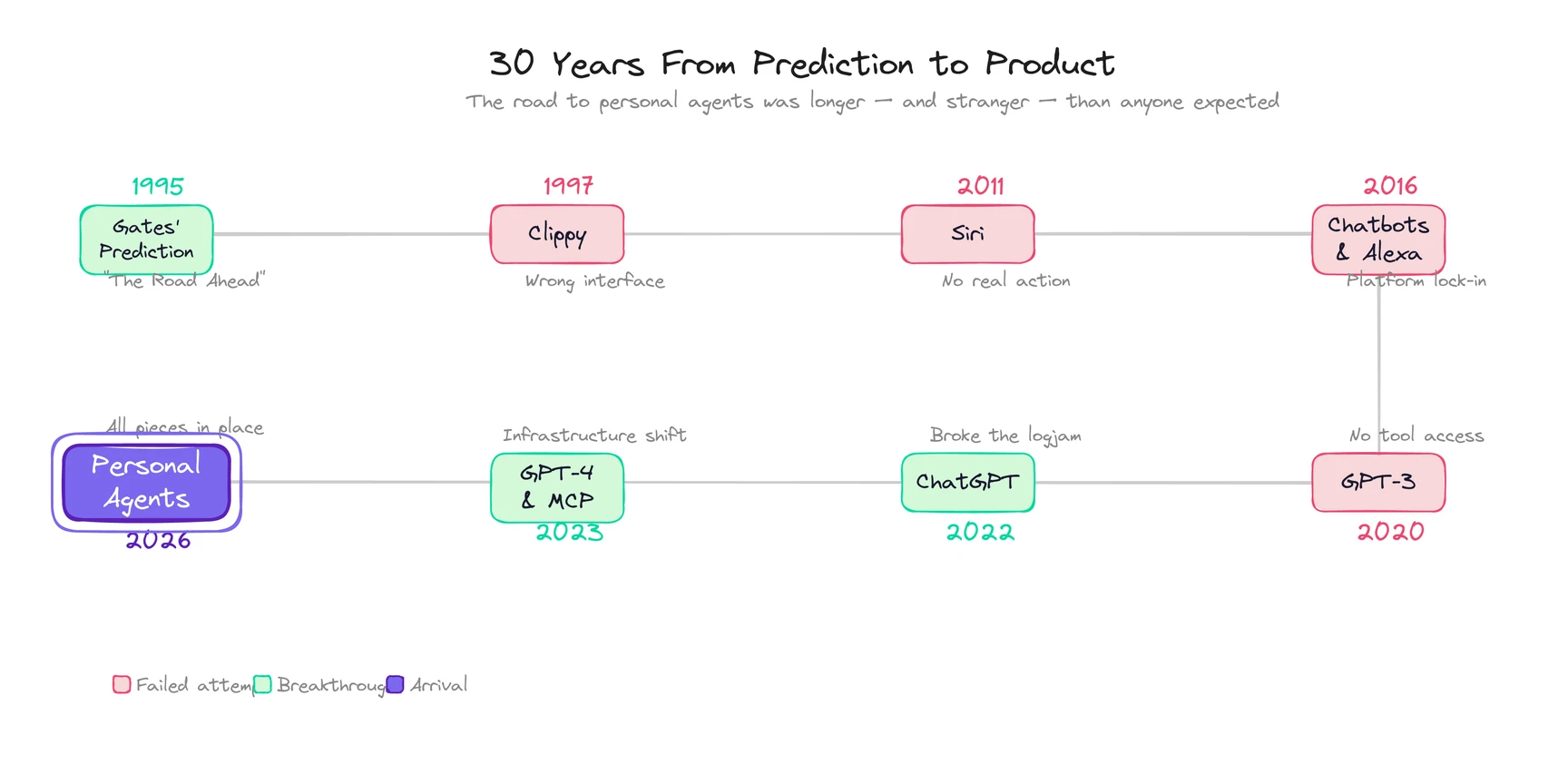

Thirty years later, that paragraph is a product category. A personal agent is an AI that maintains persistent knowledge of you, calls tools to act on your behalf, and compounds in value the longer you use it. The idea didn’t change. The technology caught up. But the three decades in between are a graveyard of attempts that got one piece right and everything else wrong. That history is worth knowing, because it explains why the thing that’s arriving now is different from everything that came before.

What did Bill Gates predict in 1995?

Most tech predictions age badly. This one aged like a blueprint.

In The Road Ahead, Gates described a future where every person would have a “personal agent” living on the network. Not a search engine. Not a voice command interface. An agent, a piece of software that would learn your preferences by watching your behavior, act on your behalf without waiting for instructions, negotiate with other people’s agents, and stay exclusively aligned with your interests rather than an advertiser’s.

He was specific about four things:

The agent would learn by observation. No profile forms. No preference quizzes. It would watch how you spent time and infer what you cared about.

It would act, not just inform. Buy tickets. Respond to messages. Schedule appointments. The output wasn’t text. It was completed tasks.

It would be yours. Aligned with you, not the platform hosting it. Your data, your priorities, your rules.

It would talk to other agents. Your agent negotiating meeting times with your colleague’s agent. A network of agents coordinating tasks across people.

In 1995, none of the enabling technology existed. No cloud computing. No machine learning at scale. No smartphones. Google didn’t exist yet; its founders had only just met at Stanford. Gates was describing something that wouldn’t be buildable for three decades.

But the definition was already right. Compare what he wrote in 1995 to how the personal agent category is defined today. The concept barely moved. Everything else had to catch up.

Why did every early attempt fail?

The personal agent idea didn’t disappear after 1995. It resurfaced every few years, each time wearing a new costume, and each time crashing into the same wall.

Clippy (1997). Microsoft shipped the Office Assistant and accidentally created the most famous failed agent in computing history. Clippy watched what you typed and offered suggestions. The problem: it couldn’t actually reason. Its pattern matching was crude, its interruptions constant, and its suggestions wrong often enough to be infuriating. Users hated it. For a decade after, the phrase “intelligent assistant” carried the stink of a paperclip asking if you needed help writing a letter.

(I sometimes wonder how much Clippy set the whole field back. A generation of product managers learned that proactive software was annoying. That lesson was wrong, but it stuck.)

Siri (2011). Apple put a voice-activated assistant on the iPhone 4S, and for a moment it felt like Gates’ vision was arriving. Millions of people could talk to their phone and get things done. But Siri wasn’t a personal agent. It handled one-shot commands inside Apple’s ecosystem. It didn’t learn your preferences over time. It couldn’t take multi-step autonomous action. It didn’t work across platforms. Siri was a voice interface bolted onto a search engine. Useful. Not an agent.

The chatbot wave (2014-2016). Facebook launched Messenger Platform, and the prediction was that chatbots would be the next apps. Thousands were built. Almost all of them were scripted decision trees wearing a conversational mask. By 2017, the wave had crashed. Another generation concluded that AI agents weren’t ready.

Alexa and Google Assistant (2016-2019). Smart speakers got good at factual questions and smart home control. But they shared Siri’s limits: reactive, ecosystem-locked, session-based. You could ask them to set a timer. You couldn’t ask them to manage your week.

GPT-3 (2020). OpenAI released a language model that could generate coherent text across a wide range of tasks, and developers started imagining what would happen if you connected that reasoning to real-world actions. But GPT-3 couldn’t call tools, couldn’t browse the web, and forgot everything between prompts. The reasoning engine existed. The agent framework didn’t.

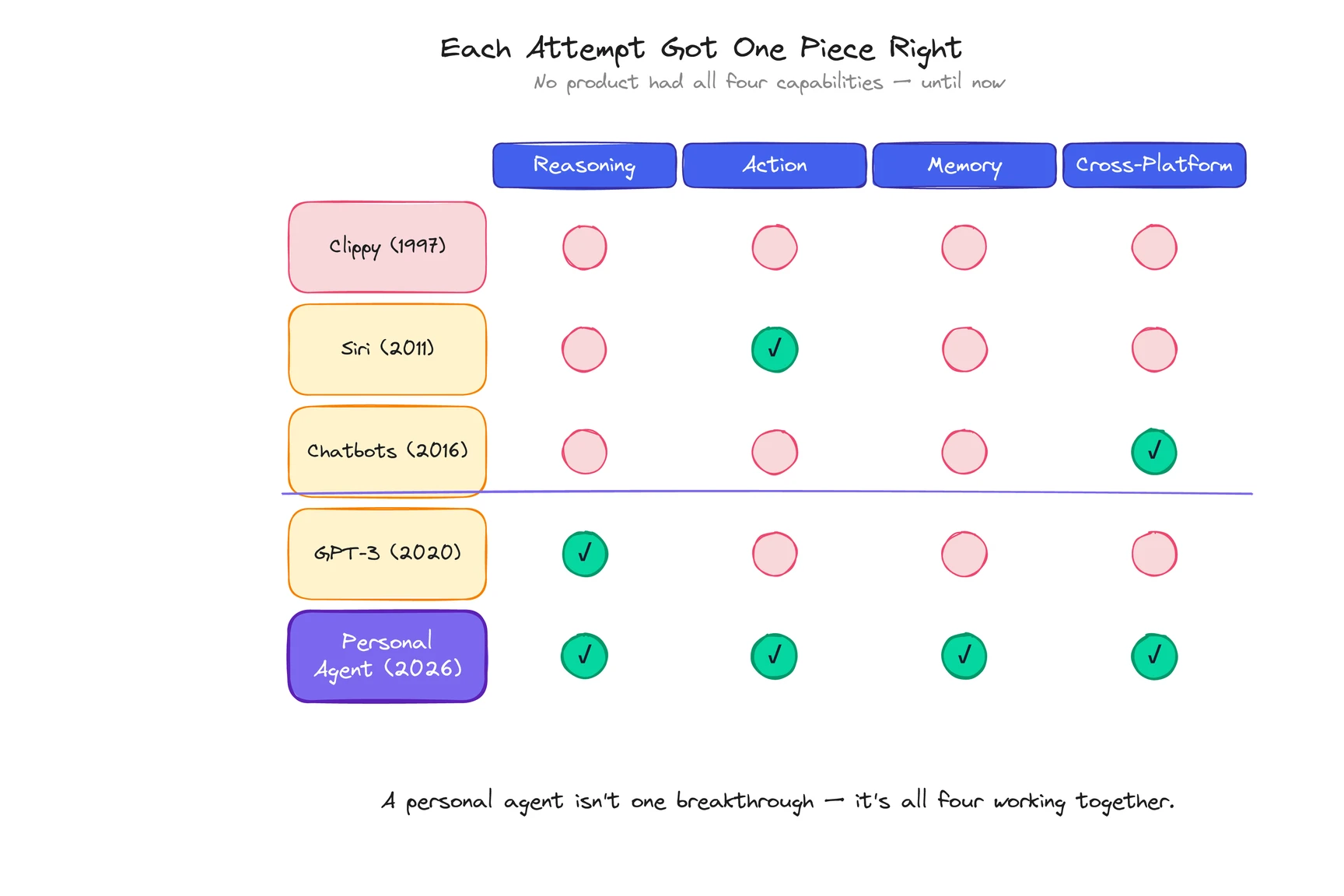

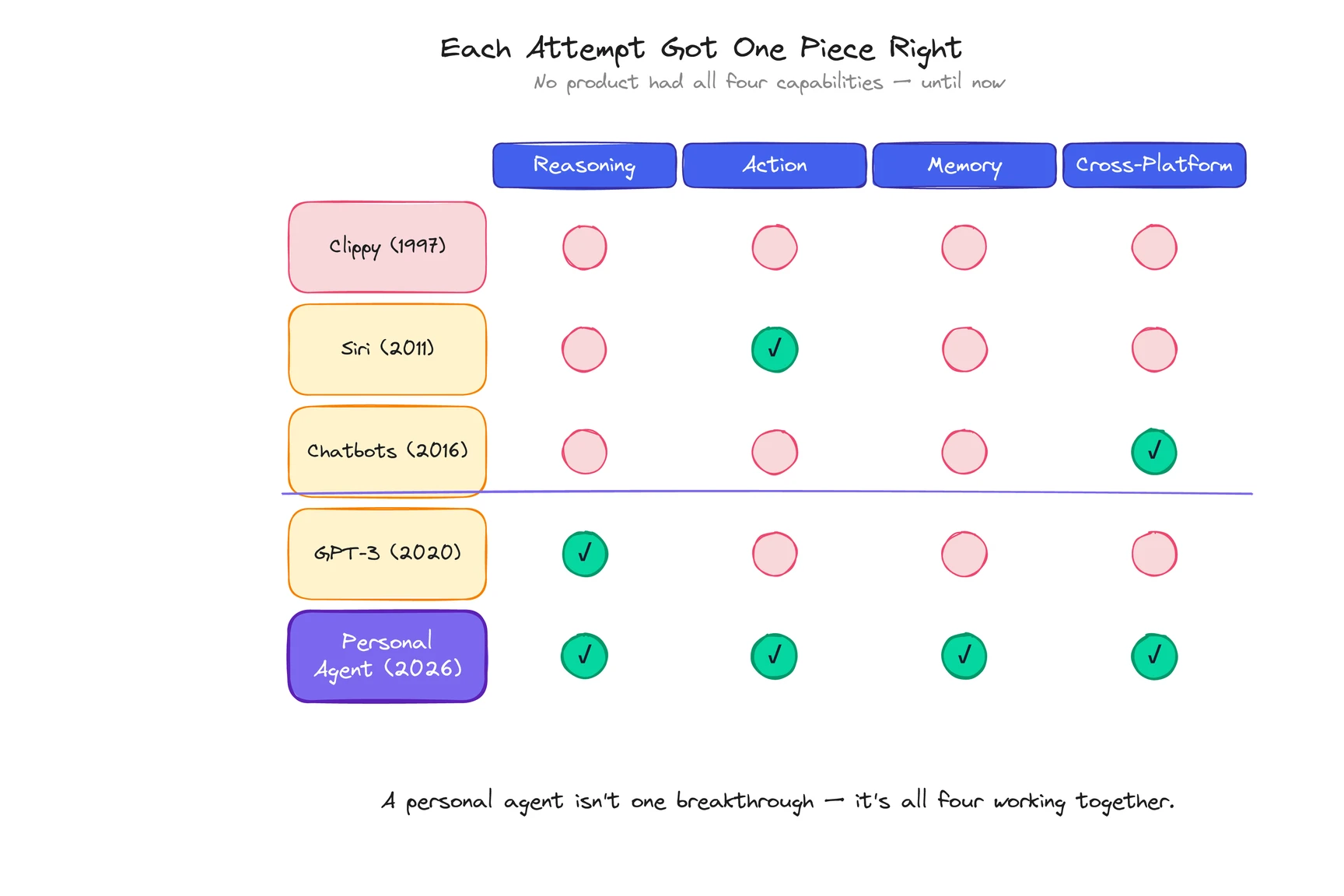

Here’s the pattern. Every attempt got one piece right and missed the rest. Clippy had proactivity but no intelligence. Siri had intelligence but no action scope. Chatbots had conversation but no memory. GPT-3 had reasoning but no tools.

A personal agent needs all four: reasoning AND action AND memory AND cross-platform access. For 27 years, no technology stack could deliver all of them at once.

That changed fast.

What broke the logjam in 2022-2023?

Two launches, about four months apart, cracked the problem open. Two more events in 2023 showed where it was heading.

November 2022: ChatGPT launched. Within 60 days it had 100 million users, the fastest consumer product adoption in history at that point. The public suddenly understood what a large language model could do. More importantly for the agent story, developers saw that LLMs could reason through complex tasks, maintain context within a conversation, and respond in natural language. The reasoning layer that Gates’ 1995 vision required was suddenly available as an API anyone could call.

March 2023: GPT-4 shipped, and tool calling followed. This was the real breakthrough, and most people missed it in the noise around GPT-4’s raw intelligence. Within days of the launch, OpenAI announced ChatGPT plugins for web browsing and code execution, and in June 2023 it added function calling to the API. The model no longer just generated text. It could call external tools: browse the web, execute code, query databases, interact with APIs. For the first time, an AI system could reason and act through the same interface. The architectural wall that stopped every previous attempt, the one that forced a choice between understanding language and taking action, was gone.

March 2023: AutoGPT went viral. A developer named Toran Bruce Richards released an open-source experiment that chained GPT-4 calls in a loop. Given a goal, it would break it into sub-tasks, execute them, evaluate results, and iterate. AutoGPT was unreliable. Most tasks failed. But it captured something: the idea that AI could pursue goals autonomously, not just answer questions. The project passed 100,000 GitHub stars within weeks.
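The plan, execute, evaluate, iterate loop described above is simple enough to sketch in a few lines. This is not AutoGPT’s actual code. The function names (`run_goal_loop`, `toy_plan`, `toy_execute`, `toy_is_done`) are hypothetical, and the three toy functions stand in for what would be model calls in a real system.

```python
from typing import Callable

def run_goal_loop(
    goal: str,
    plan: Callable[[str], list[str]],
    execute: Callable[[str], str],
    is_done: Callable[[str, list[str]], bool],
    max_steps: int = 10,
) -> list[str]:
    """Plan sub-tasks, run them in order, and stop once the goal is judged
    complete or the step budget is spent."""
    results: list[str] = []
    queue = plan(goal)              # break the goal into sub-tasks
    steps = 0
    while queue and steps < max_steps:
        task = queue.pop(0)
        results.append(execute(task))   # act on one sub-task
        steps += 1
        if is_done(goal, results):      # evaluate progress so far
            break
    return results

# Toy stand-ins for model calls (assumptions for illustration only).
def toy_plan(goal: str) -> list[str]:
    return [f"research {goal}", f"draft {goal}", f"review {goal}"]

def toy_execute(task: str) -> str:
    return f"done: {task}"

def toy_is_done(goal: str, results: list[str]) -> bool:
    return len(results) >= 2

outcome = run_goal_loop("trip itinerary", toy_plan, toy_execute, toy_is_done)
print(outcome)
```

The `max_steps` budget matters more than it looks. Without a hard stop, a loop like this can keep spending model calls on a goal it will never reach.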

November 2023: Gates revisited his prediction. On GatesNotes, he wrote that agents would bring about the biggest revolution in computing since the move from typing commands to tapping on icons. Earlier that year, speaking at an AI event, he had put the stakes more bluntly: “Whoever wins the personal agent, that’s the big thing.” The paragraph from page 103 was suddenly a race with billions of dollars behind it.

Learn what a personal agent actually does today →

What three infrastructure shifts made personal agents real?

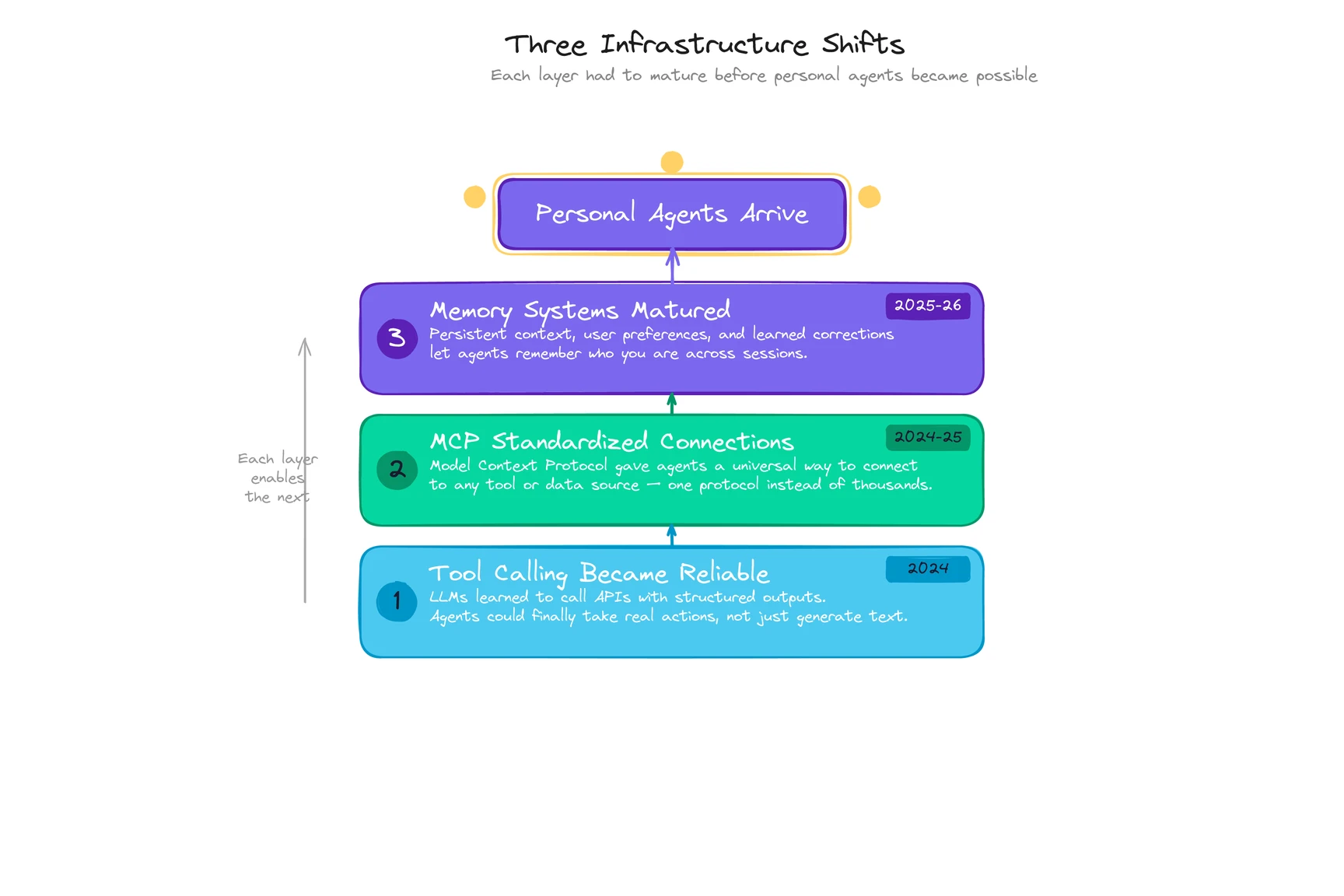

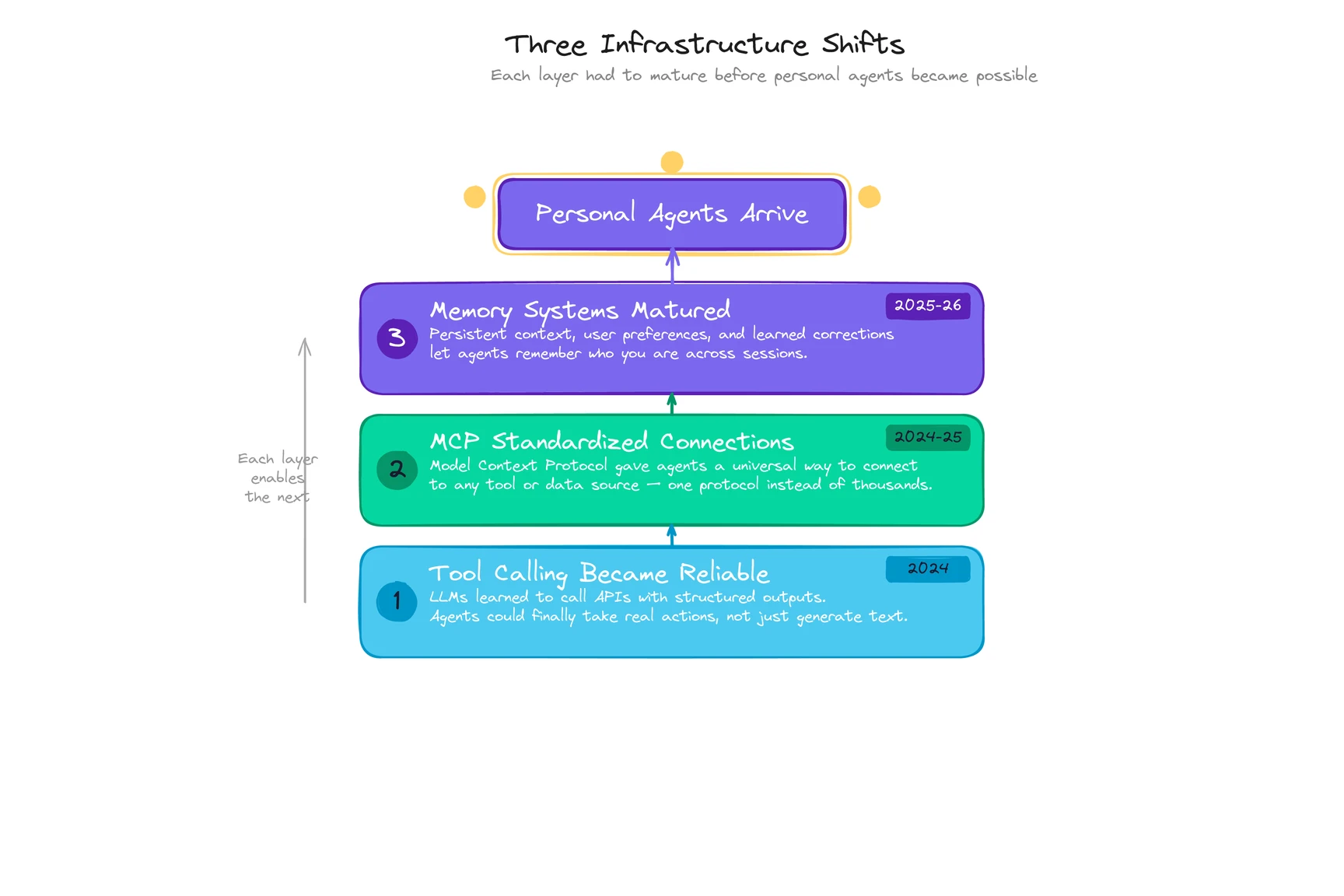

Having a reasoning engine that could call tools was necessary. It wasn’t sufficient. Between early 2024 and early 2026, three quieter shifts turned “AI agent” from a demo concept into a product people actually used.

Shift 1: Tool calling became boring. In 2023, connecting an LLM to external tools was brittle, unpredictable, the kind of thing that worked in a demo and broke in production. By mid-2024, every major model API shipped tool calling as a standard feature. GPT-4o, Claude 3, Gemini 1.5 all handled it reliably. The model could decide which tool to use, format the right parameters, and process the result. This sounds incremental. It was the plumbing that made everything else possible.
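To make “decide which tool, format the parameters, process the result” concrete, here is a minimal sketch of the application side of tool calling. It assumes the model emits a JSON object with a `name` and an `arguments` field. That shape, the `dispatch` function, and both tools are hypothetical; each vendor’s API has its own message format.

```python
import json

# Two hypothetical tools the application exposes to the model.
def get_weather(city: str) -> str:
    return f"Sunny in {city}"

def add_event(title: str, day: str) -> str:
    return f"Added '{title}' on {day}"

TOOLS = {"get_weather": get_weather, "add_event": add_event}

def dispatch(tool_call_json: str) -> str:
    """Parse a model-emitted tool call and run the matching function.
    Errors are returned as text so they can be fed back to the model."""
    call = json.loads(tool_call_json)
    name = call["name"]
    if name not in TOOLS:
        return f"error: unknown tool {name!r}"
    try:
        return TOOLS[name](**call["arguments"])
    except TypeError as exc:
        return f"error: bad arguments for {name}: {exc}"

# Pretend the model chose a tool and filled in its parameters.
model_output = '{"name": "add_event", "arguments": {"title": "Dentist", "day": "Friday"}}'
result = dispatch(model_output)
print(result)
```

Returning errors as strings instead of raising is a deliberate choice in this sketch: the result goes back into the conversation, so the model gets a chance to correct a bad call.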

Shift 2: MCP standardized the connections. Anthropic released the Model Context Protocol in late 2024, creating an open standard for how agents connect to external tools and data sources. Before MCP, every integration was custom-built. A different connector for email, another for calendar, another for your CRM. After MCP, a single protocol handled all of them. By early 2026, thousands of MCP servers existed. Shopify, Salesforce, and Stripe offered native endpoints. MCP did for agent integrations what USB did for peripherals: turned a wiring problem into a plug-and-socket problem.
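Under the hood, MCP messages are JSON-RPC 2.0, and the two methods an agent leans on most are `tools/list` (discover what a server offers) and `tools/call` (invoke one of those tools). The sketch below only builds the request payloads. The `jsonrpc_request` helper and the `create_event` tool are hypothetical, and a real session also needs an initialization handshake and a transport, both omitted here.

```python
import json
from itertools import count
from typing import Optional

_ids = count(1)  # JSON-RPC requests carry an id so replies can be matched

def jsonrpc_request(method: str, params: Optional[dict] = None) -> str:
    """Build a JSON-RPC 2.0 request string of the kind MCP clients send."""
    message = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)

# Ask the server which tools it exposes.
list_tools = jsonrpc_request("tools/list")

# Invoke one tool by name. "create_event" is a made-up tool for illustration.
call_tool = jsonrpc_request(
    "tools/call",
    {"name": "create_event", "arguments": {"title": "Standup", "day": "Monday"}},
)

print(list_tools)
print(call_tool)
```

The same two request shapes work whether the server fronts email, a calendar, or a CRM, which is the point of the USB comparison.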

Shift 3: Memory systems matured. Early agents had no memory between sessions. You’d explain your preferences, close the tab, come back, and start from scratch. (Sound familiar?) By 2025, products started building persistent memory architectures: vector databases for retrieval, structured knowledge graphs for user context, correction logs that learned from your feedback. Memory is the thing that turns an AI tool into something personal. Without it, every interaction is a first date. With it, the agent compounds.
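The retrieval half of that memory stack can be sketched without any infrastructure. The example below assumes a toy “embedding” made of word counts; a production system would use a learned embedding model and a vector database instead. `MemoryStore`, `embed`, and `cosine` are hypothetical names, not any product’s API.

```python
import math
from collections import Counter

def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (a stand-in for a real model)."""
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[word] * b[word] for word in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0

class MemoryStore:
    """Keeps notes about a user across sessions and recalls the closest ones."""

    def __init__(self) -> None:
        self.notes: list[str] = []

    def remember(self, note: str) -> None:
        self.notes.append(note)

    def recall(self, query: str, k: int = 1) -> list[str]:
        q = embed(query)
        ranked = sorted(self.notes, key=lambda n: cosine(q, embed(n)), reverse=True)
        return ranked[:k]

memory = MemoryStore()
memory.remember("prefers morning meetings before 11am")
memory.remember("allergic to peanuts")
memory.remember("flies economy unless the flight is over six hours")

top = memory.recall("schedule meetings this week")
print(top)
```

Word overlap is a crude similarity signal, and it only works here because the query and the stored note share the word “meetings.” Learned embeddings exist precisely to match notes that mean the same thing in different words.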

These three shifts arrived in sequence, each unlocking the next. Tool calling made action possible. MCP made action scalable. Memory made it personal. (For a technical breakdown of how these components work together in practice, see How Do Personal Agents Work?)

Products followed. Anthropic shipped computer use for Claude in late 2024, giving the model the ability to click, scroll, and navigate a desktop. Manus launched in early 2025 and showed that a general-purpose agent could handle complex multi-step tasks. Meta acquired it for roughly $2 billion that December. And a new wave of products, including ego, started building what Gates described on page 103: personal agents aligned exclusively with one user, operating across their entire digital life.

See what ego is building →

What does the 30-year arc tell us about what comes next?

The pattern from 1995 to 2026 has a clear lesson: the vision was never wrong. The infrastructure was never ready. And when infrastructure catches up, the product emerges fast.

Three things are happening now that Gates’ original prediction anticipated but couldn’t have specified:

Agents will talk to other agents. Gates described a network of personal agents negotiating with each other. Anthropic’s MCP and Google’s A2A protocol are the early plumbing. Your agent scheduling a meeting by coordinating with your colleague’s agent, negotiating time zones and priority levels without either human touching a calendar. It’s happening in limited contexts already.
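Stripped of the protocol layer, the scheduling case reduces to two agents exchanging ranked availability and settling on the slot that suits both owners best. The sketch below assumes each agent shares an ordered list of free slots and that the lowest combined rank wins. The `negotiate` function is hypothetical, and this is not how MCP or A2A messages are actually structured.

```python
from typing import Optional

def negotiate(ranked_a: list[str], ranked_b: list[str]) -> Optional[str]:
    """Return the mutually free slot with the lowest combined preference rank,
    or None when the two calendars share no free slot."""
    common = set(ranked_a) & set(ranked_b)
    if not common:
        return None
    # Ties are broken alphabetically so the outcome is deterministic.
    return min(common, key=lambda s: (ranked_a.index(s) + ranked_b.index(s), s))

# Each list is one owner's free slots, most preferred first.
alice = ["Tue 10:00", "Wed 14:00", "Thu 09:00"]
bob = ["Thu 09:00", "Tue 10:00", "Fri 16:00"]

slot = negotiate(alice, bob)
print(slot)
```

Real negotiations involve more than calendars (priority, time zones, who can be moved), but the shape is the same: each agent represents one person’s preferences and only the agreed result reaches the humans.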

The definition is still contested. There’s no industry-standard definition of “personal agent” in 2026. AI companies, browser companies, and legacy assistant platforms are all claiming the term. The products are architecturally different, but the marketing language is converging. This is normal for a new category. It’s also temporary. The market will pick a winner, and the definition will crystallize around whatever that product looks like.

The uncomfortable truth. Pure tool-shaped agents probably won’t survive as standalone products. As the underlying models improve, any agent that only executes instructions will be absorbed into the operating system, the browser, the email client. What won’t be absorbed is the agent that has evolved alongside you for months. The one that understands not just your preferences but your judgment. The agents with staying power will be the ones that developed something personal along the way.

Gates gave this a five-year window from November 2023. That puts widespread adoption around 2028. The smartphone followed a similar curve: 2007 to 2012, from novelty to necessity. We’re somewhere around 2009 in that analogy. The early products work. Most people haven’t tried one. The ones who have aren’t going back.
